rm -rf `find . -type d -name .svn`

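This command recursively finds every directory named ".svn" below the current directory and deletes it, including its contents. The backtick substitution is split on whitespace, so it is only safe when none of the matching paths contain spaces.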
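
The same cleanup can be sketched in Java, which avoids the whitespace problem because paths are never passed through a shell. This is a minimal sketch, not part of the original note: the class name `SvnDirCleaner` and the method `deleteSvnDirs` are made up for illustration. It first collects the matching directories and deletes them only after the walk has finished.

```java
import java.io.IOException;
import java.nio.file.FileVisitResult;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.SimpleFileVisitor;
import java.nio.file.attribute.BasicFileAttributes;
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.Stream;

// Hypothetical helper, named for this example only.
final class SvnDirCleaner {

    // Deletes every directory named ".svn" below root; returns how many were removed.
    static int deleteSvnDirs(Path root) throws IOException {
        final List<Path> svnDirs = new ArrayList<Path>();
        Files.walkFileTree(root, new SimpleFileVisitor<Path>() {
            @Override
            public FileVisitResult preVisitDirectory(Path dir, BasicFileAttributes attrs) {
                Path name = dir.getFileName();
                if (name != null && ".svn".equals(name.toString())) {
                    svnDirs.add(dir);
                    // No need to descend: the whole subtree is deleted later.
                    return FileVisitResult.SKIP_SUBTREE;
                }
                return FileVisitResult.CONTINUE;
            }
        });
        for (Path dir : svnDirs) {
            deleteRecursively(dir);
        }
        return svnDirs.size();
    }

    private static void deleteRecursively(Path dir) throws IOException {
        List<Path> entries;
        try (Stream<Path> walk = Files.walk(dir)) {
            // Reverse order puts children before their parent directory.
            entries = walk.sorted(Comparator.reverseOrder()).collect(Collectors.toList());
        }
        for (Path entry : entries) {
            Files.delete(entry);
        }
    }
}
```

Calling `SvnDirCleaner.deleteSvnDirs(Paths.get("."))` would correspond to running the shell command in the current directory.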

create or replace

package test_package as

procedure test_procedure(v varchar2);

function test_function(v varchar2) return varchar2;

end test_package;

create or replace

package body test_package as

procedure test_procedure(v varchar2) is

begin

null;

end;

function test_function(v varchar2) return varchar2 is

begin

return v;

end;

end test_package;

DataSource ds = ...

Connection con = ds.getConnection();

CallableStatement cs =

con.prepareCall("{call test_package.test_procedure(?)}");

cs.setString(1, "Calling test_procedure!");

cs.executeUpdate();

cs.close();

DataSource ds = ...

Connection con = ds.getConnection();

CallableStatement cs =

con.prepareCall("{? = call test_package.test_function(?)}");

cs.registerOutParameter(1, java.sql.Types.VARCHAR);

cs.setString(2, "Calling test_function!");

cs.execute();

String s = cs.getString(1);

cs.close();

DataSource ds = ...

Connection con = ds.getConnection();

PreparedStatement ps =

con.prepareStatement(

"SELECT test_package.test_function(?) S FROM dual"

);

ps.setString(1, "Calling test_function!");

ResultSet r = ps.executeQuery();

String result = null;

if (r.next()) {

result = r.getString("S");

}

con.close();

<?xml version="1.0" encoding="UTF-8"?>

<xs:schema xmlns:xs="http://www.w3.org/2001/XMLSchema"

targetNamespace="http://javaeenotes.blogspot.com/schema"

xmlns:tns="http://javaeenotes.blogspot.com/schema"

elementFormDefault="qualified">

<xs:element name="person">

<xs:complexType>

<xs:sequence>

<xs:element name="name" type="xs:string"/>

<xs:element name="age" type="xs:integer"/>

<xs:element name="birthdate" type="xs:date"/>

</xs:sequence>

</xs:complexType>

</xs:element>

</xs:schema>

<?xml version="1.0" encoding="UTF-8"?>

<tns:person

xmlns:tns="http://javaeenotes.blogspot.com/schema"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation=

"http://javaeenotes.blogspot.com/schema schema.xsd">

<tns:name>Albert</tns:name>

<tns:age>29s</tns:age>

<tns:birthdate>1981-11-19</tns:birthdate>

</tns:person>

package com.javaeenotes;

import java.io.File;

import java.io.IOException;

import javax.xml.XMLConstants;

import javax.xml.parsers.DocumentBuilder;

import javax.xml.parsers.DocumentBuilderFactory;

import javax.xml.parsers.ParserConfigurationException;

import javax.xml.transform.dom.DOMSource;

import javax.xml.validation.Schema;

import javax.xml.validation.SchemaFactory;

import javax.xml.validation.Validator;

import org.w3c.dom.Document;

import org.xml.sax.SAXException;

public class Main {

private static final String SCHEMA = "schema.xsd";

private static final String DOCUMENT = "document.xml";

public static void main(String[] args) {

Main m = new Main();

m.validate();

}

public void validate() {

DocumentBuilderFactory dbf =

DocumentBuilderFactory.newInstance();

dbf.setNamespaceAware(true);

try {

DocumentBuilder parser = dbf.newDocumentBuilder();

Document document = parser.parse(new File(DOCUMENT));

SchemaFactory factory = SchemaFactory

.newInstance(XMLConstants.W3C_XML_SCHEMA_NS_URI);

Schema schema = null;

schema = factory.newSchema(new File(SCHEMA));

Validator validator = schema.newValidator();

validator.validate(new DOMSource(document));

} catch (SAXException e) {

e.printStackTrace();

} catch (IllegalArgumentException e) {

e.printStackTrace();

} catch (IOException e) {

e.printStackTrace();

} catch (ParserConfigurationException e) {

e.printStackTrace();

}

}

}
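
To see what the validator reports for the invalid `age` value in the sample document, a self-contained variant can keep both the schema and the document in strings. This is a sketch under assumptions: the class name `InlineValidation` and its helper methods are invented for this example, and the schema is a compacted copy of the one above. It relies on the default error handling of `Validator`, which throws a `SAXException` on the first validation error.

```java
import java.io.StringReader;
import javax.xml.XMLConstants;
import javax.xml.transform.stream.StreamSource;
import javax.xml.validation.Schema;
import javax.xml.validation.SchemaFactory;
import javax.xml.validation.Validator;
import org.xml.sax.SAXException;

// Hypothetical class, named for this example only.
final class InlineValidation {

    // Compact copy of the person schema shown earlier.
    static final String XSD =
        "<xs:schema xmlns:xs='http://www.w3.org/2001/XMLSchema'"
        + " targetNamespace='http://javaeenotes.blogspot.com/schema'"
        + " elementFormDefault='qualified'>"
        + "<xs:element name='person'><xs:complexType><xs:sequence>"
        + "<xs:element name='name' type='xs:string'/>"
        + "<xs:element name='age' type='xs:integer'/>"
        + "<xs:element name='birthdate' type='xs:date'/>"
        + "</xs:sequence></xs:complexType></xs:element></xs:schema>";

    // Builds a person document with the given text in the age element.
    static String person(String age) {
        return "<tns:person xmlns:tns='http://javaeenotes.blogspot.com/schema'>"
            + "<tns:name>Albert</tns:name>"
            + "<tns:age>" + age + "</tns:age>"
            + "<tns:birthdate>1981-11-19</tns:birthdate>"
            + "</tns:person>";
    }

    // Returns null when the document is valid, otherwise the validator's message.
    static String validate(String xml) throws Exception {
        SchemaFactory factory = SchemaFactory.newInstance(XMLConstants.W3C_XML_SCHEMA_NS_URI);
        Schema schema = factory.newSchema(new StreamSource(new StringReader(XSD)));
        Validator validator = schema.newValidator();
        try {
            validator.validate(new StreamSource(new StringReader(xml)));
            return null;
        } catch (SAXException e) {
            return e.getMessage();
        }
    }

    public static void main(String[] args) throws Exception {
        System.out.println("age=29  -> " + validate(person("29")));
        System.out.println("age=29s -> " + validate(person("29s")));
    }
}
```

The exact wording of the error message depends on the XML parser bundled with the JDK, so it is best used for logging rather than matched in code.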

ORA-01461:

can bind a LONG value only for insert into a LONG column

Connection con = ...

String xml = "<test>test</test>";

oracle.sql.CLOB clob = null;

clob = CLOB.createTemporary(con, true,

CLOB.DURATION_SESSION);

clob.open(CLOB.MODE_READWRITE);

clob.setString(1, xml);

clob.close();

PreparedStatement s = con.prepareStatement(

"INSERT INTO table (xml) VALUES(XMLType(?))"

);

s.setObject(1, clob);

s.executeUpdate();

s.close();

// Free the temporary CLOB only after the insert has used it.

clob.freeTemporary();

@WebService

public class WebService {

@Resource

WebServiceContext wsc;

@WebMethod

public String webMethod() {

MessageContext mc = wsc.getMessageContext();

HttpServletRequest req = (HttpServletRequest)

mc.get(MessageContext.SERVLET_REQUEST);

return "Client: " +

req.getRemoteHost() + " (" +

req.getRemoteAddr() + ").";

}

}

package com.javaeenotes;

import javax.annotation.Resource;

import javax.ejb.Stateless;

@Stateless(mappedName = "ejb/ejbEnv")

public class EjbEnv implements EjbEnvRemote, EjbEnvLocal {

@Resource

private String var1;

@Resource

private int var2;

public String getVar1() {

return var1;

}

public int getVar2() {

return var2;

}

}

<?xml version="1.0" encoding="UTF-8"?>

<ejb-jar xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xmlns="http://java.sun.com/xml/ns/javaee"

xmlns:ejb="http://java.sun.com/xml/ns/javaee/ejb-jar_3_0.xsd"

xsi:schemaLocation="http://java.sun.com/xml/ns/javaee

http://java.sun.com/xml/ns/javaee/ejb-jar_3_0.xsd"

version="3.0">

<display-name>env_ejb</display-name>

<enterprise-beans>

<session>

<ejb-name>EjbEnv</ejb-name>

<env-entry>

<env-entry-name>

com.javaeenotes.EjbEnv/var1

</env-entry-name>

<env-entry-type>

java.lang.String

</env-entry-type>

<env-entry-value>

Environment variables from ejb-jar.xml

</env-entry-value>

</env-entry>

<env-entry>

<env-entry-name>

com.javaeenotes.EjbEnv/var2

</env-entry-name>

<env-entry-type>

java.lang.Integer

</env-entry-type>

<env-entry-value>

999

</env-entry-value>

</env-entry>

</session>

</enterprise-beans>

</ejb-jar>

package com.javaeenotes;

import java.io.IOException;

import javax.ejb.EJB;

import javax.naming.Context;

import javax.naming.InitialContext;

import javax.naming.NamingException;

import javax.servlet.ServletException;

import javax.servlet.http.HttpServlet;

import javax.servlet.http.HttpServletRequest;

import javax.servlet.http.HttpServletResponse;

public class WebEnv extends HttpServlet {

@EJB(name="ejb/ejbEnv")

private EjbEnvRemote ejbEnv;

protected void doGet(HttpServletRequest request,

HttpServletResponse response)

throws ServletException, IOException {

try {

Context env = (Context) new InitialContext()

.lookup("java:comp/env");

String s = (String) env.lookup("webVar1");

int i = ((Integer) env.lookup("webVar2")).intValue();

response.getWriter().write(

"webVar1: " + s + "\n");

response.getWriter().write(

"webVar2: " + i + "\n");

response.getWriter().write(

"ejbVar1: " + ejbEnv.getVar1() + "\n");

response.getWriter().write(

"ejbVar2: " + ejbEnv.getVar2() + "\n");

} catch (NamingException e) {

response.getWriter().write("NamingException");

}

}

}

<?xml version="1.0" encoding="UTF-8"?>

<web-app xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xmlns="http://java.sun.com/xml/ns/javaee"

xmlns:web="http://java.sun.com/xml/ns/javaee/web-app_2_5.xsd"

xsi:schemaLocation="http://java.sun.com/xml/ns/javaee

http://java.sun.com/xml/ns/javaee/web-app_2_5.xsd"

id="env_web" version="2.5">

<display-name>env_web</display-name>

<servlet>

<description></description>

<display-name>WebEnv</display-name>

<servlet-name>WebEnv</servlet-name>

<servlet-class>com.javaeenotes.WebEnv</servlet-class>

</servlet>

<servlet-mapping>

<servlet-name>WebEnv</servlet-name>

<url-pattern>/WebEnv</url-pattern>

</servlet-mapping>

<env-entry>

<env-entry-name>webVar1</env-entry-name>

<env-entry-type>java.lang.String</env-entry-type>

<env-entry-value>

Environment variables from web.xml

</env-entry-value>

</env-entry>

<env-entry>

<env-entry-name>webVar2</env-entry-name>

<env-entry-type>java.lang.Integer</env-entry-type>

<env-entry-value>888</env-entry-value>

</env-entry>

</web-app>

webVar1: Environment variables from web.xml

webVar2: 888

ejbVar1: Environment variables from ejb-jar.xml

ejbVar2: 999

<?xml version='1.0' encoding='UTF-8'?>

<deployment-plan xmlns="http://xmlns.oracle.com/weblogic/deployment-plan"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation=

"http://xmlns.oracle.com/weblogic/deployment-plan

http://xmlns.oracle.com/weblogic/deployment-plan/1.0/deployment-plan.xsd"

global-variables="false">

<application-name>env_ear</application-name>

<variable-definition>

<variable>

<name>WebEnv_Var1</name>

<value>NEW environment variables from web.xml</value>

</variable>

<variable>

<name>WebEnv_Var2</name>

<value>800</value>

</variable>

<variable>

<name>EjbEnv_Var1</name>

<value>NEW environment variables from ejb-jar.xml</value>

</variable>

<variable>

<name>EjbEnv_Var2</name>

<value>900</value>

</variable>

</variable-definition>

<module-override>

<module-name>env_ear.ear</module-name>

<module-type>ear</module-type>

<module-descriptor external="false">

<root-element>weblogic-application</root-element>

<uri>META-INF/weblogic-application.xml</uri>

</module-descriptor>

<module-descriptor external="false">

<root-element>application</root-element>

<uri>META-INF/application.xml</uri>

</module-descriptor>

<module-descriptor external="true">

<root-element>wldf-resource</root-element>

<uri>META-INF/weblogic-diagnostics.xml</uri>

</module-descriptor>

</module-override>

<module-override>

<module-name>env_ejb.jar</module-name>

<module-type>ejb</module-type>

<module-descriptor external="false">

<root-element>weblogic-ejb-jar</root-element>

<uri>META-INF/weblogic-ejb-jar.xml</uri>

</module-descriptor>

<module-descriptor external="false">

<root-element>ejb-jar</root-element>

<uri>META-INF/ejb-jar.xml</uri>

<variable-assignment>

<name>EjbEnv_Var1</name>

<xpath>/ejb-jar/enterprise-beans/session/

[ejb-name="EjbEnv"]/env-entry/

[env-entry-name="com.javaeenotes.EjbEnv/var1"]/

env-entry-value</xpath>

<operation>replace</operation>

</variable-assignment>

<variable-assignment>

<name>EjbEnv_Var2</name>

<xpath>/ejb-jar/enterprise-beans/session/

[ejb-name="EjbEnv"]/env-entry/

[env-entry-name="com.javaeenotes.EjbEnv/var2"]/

env-entry-value</xpath>

<operation>replace</operation>

</variable-assignment>

</module-descriptor>

</module-override>

<module-override>

<module-name>env_web.war</module-name>

<module-type>war</module-type>

<module-descriptor external="false">

<root-element>weblogic-web-app</root-element>

<uri>WEB-INF/weblogic.xml</uri>

</module-descriptor>

<module-descriptor external="false">

<root-element>web-app</root-element>

<uri>WEB-INF/web.xml</uri>

<variable-assignment>

<name>WebEnv_Var1</name>

<xpath>/web-app/env-entry/

[env-entry-name="webVar1"]/

env-entry-value</xpath>

<operation>replace</operation>

</variable-assignment>

<variable-assignment>

<name>WebEnv_Var2</name>

<xpath>/web-app/env-entry/

[env-entry-name="webVar2"]/

env-entry-value</xpath>

<operation>replace</operation>

</variable-assignment>

</module-descriptor>

</module-override>

<config-root></config-root>

</deployment-plan>

<variable-definition>

<variable>

<name>WebEnv_Var1</name>

<value>NEW environment variables from web.xml</value>

</variable>

<variable>

<name>WebEnv_Var2</name>

<value>800</value>

</variable>

<variable>

<name>EjbEnv_Var1</name>

<value>NEW environment variables from ejb-jar.xml</value>

</variable>

<variable>

<name>EjbEnv_Var2</name>

<value>900</value>

</variable>

</variable-definition>

<variable-assignment>

<name>WebEnv_Var2</name>

<xpath>/web-app/env-entry/

[env-entry-name="webVar2"]/

env-entry-value</xpath>

<operation>replace</operation>

</variable-assignment>

webVar1: NEW environment variables from web.xml

webVar2: 800

ejbVar1: NEW environment variables from ejb-jar.xml

ejbVar2: 900

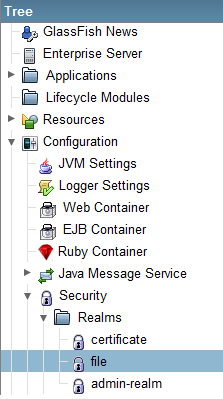

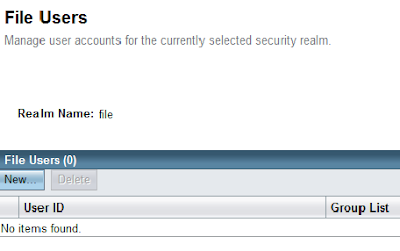

<realm

className="org.apache.catalina.realm.MemoryRealm"

digest="" pathname="conf/users.xml" />

<tomcat-users>

<user name="john"

password="secret"

roles="user" />

<user name="peter"

password="secret"

roles="admin" />

<user name="carol"

password="secret"

roles="role,role2" />

</tomcat-users>

<!-- Security constraint for resource only accessible to role -->

<security-constraint>

<display-name>WebServiceSecurity</display-name>

<web-resource-collection>

<web-resource-name>Authorized users only</web-resource-name>

<url-pattern>/ExampleWSService</url-pattern>

<http-method>POST</http-method>

</web-resource-collection>

<auth-constraint>

<role-name>user</role-name>

</auth-constraint>

</security-constraint>

<!-- BASIC authorization -->

<login-config>

<auth-method>BASIC</auth-method>

</login-config>

<!-- Definition of role -->

<security-role>

<role-name>user</role-name>

</security-role>

<security-role-mapping>

<role-name>user</role-name>

<group-name>wsusers</group-name>

</security-role-mapping>

public void start() {

// This statement is not needed when run in container.

service = new ExampleWSService();

ExampleWS port = service.getExampleWSPort();

// Authentication

BindingProvider bindProv = (BindingProvider) port;

Map<String, Object> context = bindProv.getRequestContext();

context.put(BindingProvider.USERNAME_PROPERTY, "peter");

context.put(BindingProvider.PASSWORD_PROPERTY, "qwerty1");

System.out.println(port.greet("Peter"));

System.out.println(port.multiply(3, 4));

}
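
With BASIC authentication, the user name and password set on the request context travel in an `Authorization` header as the Base64 encoding of `user:password`. That is an encoding, not encryption, so the credentials are readable by anyone who can see the request unless the transport is protected. The sketch below only illustrates the header format; the class name `BasicAuthHeader` and its methods are invented for this example and are not part of JAX-WS.

```java
import java.nio.charset.StandardCharsets;
import java.util.Base64;

// Hypothetical helper, named for this example only.
final class BasicAuthHeader {

    // Builds the value of the Authorization header for BASIC authentication.
    static String headerValue(String user, String password) {
        String credentials = user + ":" + password;
        return "Basic " + Base64.getEncoder()
                .encodeToString(credentials.getBytes(StandardCharsets.UTF_8));
    }

    // Recovers "user:password" from a header value, showing that it is not encrypted.
    static String decode(String headerValue) {
        String encoded = headerValue.substring("Basic ".length());
        return new String(Base64.getDecoder().decode(encoded), StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        String header = headerValue("peter", "qwerty1");
        System.out.println("Authorization: " + header);
        System.out.println("Decoded: " + decode(header));
    }
}
```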

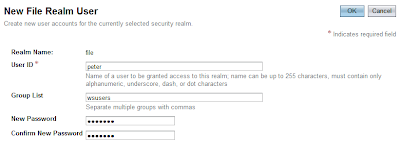

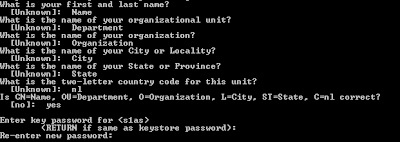

keytool -export -alias s1as -keystore keystore.jks -storepass changeit -file server.cer

keytool -import -v -trustcacerts -alias s1as -keypass changeit -file ../server/server.cer -keystore client_cacerts.jks -storepass changeit

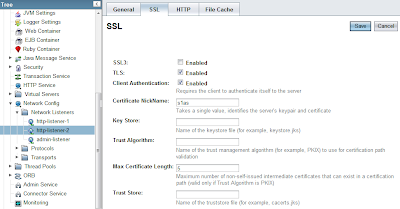

<user-data-constraint>

<transport-guarantee>CONFIDENTIAL</transport-guarantee>

</user-data-constraint>

https://localhost:8181/webservice/ExampleWSService?wsdl

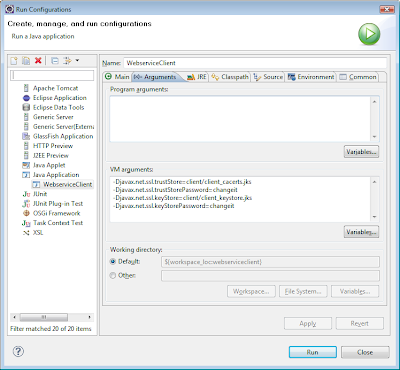

-Djavax.net.ssl.trustStore=client/client_cacerts.jks

-Djavax.net.ssl.trustStorePassword=changeit
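
When the JVM options cannot be changed, the same two properties can be set from code. This is a minimal sketch; the class name `TrustStoreConfig` is invented for this example. It assumes the call happens before the client opens its first HTTPS connection, since the default SSL context is normally initialized once and would not pick up later changes.

```java
// Hypothetical helper, named for this example only.
final class TrustStoreConfig {

    // Programmatic equivalent of the two -D options.
    static void useTrustStore(String path, String password) {
        System.setProperty("javax.net.ssl.trustStore", path);
        System.setProperty("javax.net.ssl.trustStorePassword", password);
    }

    public static void main(String[] args) {
        useTrustStore("client/client_cacerts.jks", "changeit");
        System.out.println(System.getProperty("javax.net.ssl.trustStore"));
    }
}
```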

keytool -genkey -alias client -keypass changeit -storepass changeit -keystore client_keystore.jks

keytool -export -alias client -keystore client_keystore.jks -storepass changeit -file client.cer

keytool -import -v -trustcacerts -alias client -keystore cacerts.jks -keypass changeit -file ../client/client.cer

<sun-web-app>

<security-role-mapping>

<role-name>user</role-name>

<!-- <group-name>wsusers</group-name> -->

<principal-name>CN=Name, OU=Department,

O=Organization, L=City, ST=State,

C=nl</principal-name>

</security-role-mapping>

</sun-web-app>

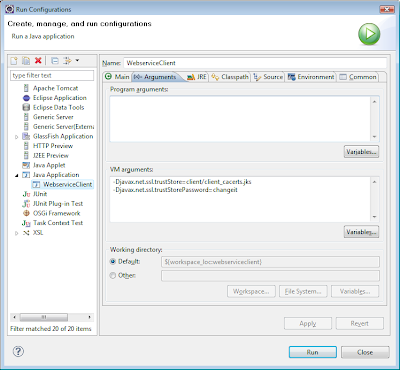

-Djavax.net.ssl.keyStore=client/client_keystore.jks

-Djavax.net.ssl.keyStorePassword=changeit

wsimport -s src -d bin http://localhost:8080/webservice/ExampleWSService?wsdl

package com.javaeenotes;

import javax.xml.ws.WebServiceRef;

public class WebserviceClient {

// This annotation only has effect in a container.

@WebServiceRef(

wsdlLocation =

"http://127.0.0.1:8080/webservice/ExampleWSService?wsdl"

)

private static ExampleWSService service;

public static void main(String[] args) {

WebserviceClient wsc = new WebserviceClient();

wsc.start();

}

public void start() {

// This statement is not needed when run in container.

service = new ExampleWSService();

ExampleWS port = service.getExampleWSPort();

System.out.println(port.greet("Peter"));

System.out.println(port.multiply(3, 4));

}

}

package com.javaeenotes;

import javax.jws.WebMethod;

import javax.jws.WebService;

@WebService

public class ExampleWS {

@WebMethod

public int sum(int a, int b) {

return a + b;

}

@WebMethod

public int multiply(int a, int b) {

return a * b;

}

@WebMethod

public String greet(String name) {

return "Hello " + name + "!";

}

}

wsgen -classpath build/classes/ -wsdl -r WebContent/WEB-INF/wsdl -s src -d build/classes/ com.javaeenotes.ExampleWS

Logger log = Logger.getLogger(Foo.class);

package test;

import org.apache.log4j.Logger;

public class LogMain {

private Logger log;

public static void main(String[] args) {

LogMain app = new LogMain();

app.run();

}

public LogMain() {

System.out.print("Application started.\n");

this.log = Logger.getLogger(LogMain.class);

}

public void run() {

this.log.trace("TRACE message!");

this.log.debug("DEBUG message!");

this.log.info("INFO message!");

this.log.warn("WARN message!");

this.log.error("ERROR message!");

this.log.fatal("FATAL message!");

}

}

log4j.rootLogger=INFO, CONSOLE, FILE

log4j.appender.FILE=org.apache.log4j.DailyRollingFileAppender

log4j.appender.FILE.File=logs/app.log

log4j.appender.FILE.datePattern=yyyyMMdd.

log4j.appender.FILE.Append=true

log4j.appender.FILE.layout=org.apache.log4j.PatternLayout

log4j.appender.FILE.layout.conversionPattern=%d{HH:mm:ss:SSS} - %p - %C{1} - %m%n

log4j.appender.CONSOLE=org.apache.log4j.ConsoleAppender

log4j.appender.CONSOLE.layout=org.apache.log4j.PatternLayout

log4j.appender.CONSOLE.layout.conversionPattern=%d{HH:mm:ss:SSS} - %p - %C{1} - %m%n

<?xml version="1.0" encoding="UTF-8"?>

<!DOCTYPE log4j:configuration SYSTEM "log4j.dtd">

<log4j:configuration

xmlns:log4j="http://jakarta.apache.org/log4j/">

<appender name="file"

class="org.apache.log4j.DailyRollingFileAppender">

<param name="file" value="logs/app.log" />

<param name="datePattern" value="yyyyMMdd." />

<param name="append" value="true" />

<layout class="org.apache.log4j.PatternLayout">

<param name="ConversionPattern"

value="%d{HH:mm:ss:SSS} - %p - %C{1} - %m%n" />

</layout>

</appender>

<appender name="console"

class="org.apache.log4j.ConsoleAppender">

<layout class="org.apache.log4j.PatternLayout">

<param name="ConversionPattern"

value="%d{HH:mm:ss:SSS} - %p - %C{1} - %m%n" />

</layout>

</appender>

<root>

<priority value="info" />

<appender-ref ref="console" />

<appender-ref ref="file" />

</root>

</log4j:configuration>

19:23:54:938 - WARN - LogMain - WARN message!

SELECT col1, col2

FROM example_table

WHERE col2 = 'some_condition'

ORDER BY col1 DESC

SELECT *

FROM (SELECT r.*, ROWNUM AS row_number

FROM (SELECT col1, col2

FROM example_table

WHERE col2 = 'some_condition'

ORDER BY col1 DESC) r)

WHERE row_number >= 201 AND row_number <= 300;
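
The bounds 201 and 300 in the query above correspond to page 3 with 100 rows per page. A small helper can compute them and leave bind placeholders in the SQL, so one prepared statement serves every page. This is a sketch only; the class name `OraclePaging` and its methods are invented for this example.

```java
// Hypothetical helper, named for this example only.
final class OraclePaging {

    // First row of a 1-based page, e.g. page 3 with 100 rows per page starts at 201.
    static long firstRow(int page, int pageSize) {
        checkArgs(page, pageSize);
        return (long) (page - 1) * pageSize + 1;
    }

    // Last row of a 1-based page, e.g. page 3 with 100 rows per page ends at 300.
    static long lastRow(int page, int pageSize) {
        checkArgs(page, pageSize);
        return (long) page * pageSize;
    }

    // Wraps an already ordered query in the ROWNUM paging construct with two
    // bind placeholders: the first row and the last row.
    static String wrap(String orderedQuery) {
        return "SELECT * FROM (SELECT r.*, ROWNUM AS row_number FROM ("
                + orderedQuery
                + ") r) WHERE row_number >= ? AND row_number <= ?";
    }

    private static void checkArgs(int page, int pageSize) {
        if (page < 1 || pageSize < 1) {
            throw new IllegalArgumentException("page and pageSize must be at least 1");
        }
    }

    public static void main(String[] args) {
        System.out.println(wrap("SELECT col1, col2 FROM example_table ORDER BY col1 DESC"));
        System.out.println(firstRow(3, 100) + " to " + lastRow(3, 100));
    }
}
```

With a `PreparedStatement` created from `wrap(...)`, the two values would be bound with `setLong(1, firstRow(page, size))` and `setLong(2, lastRow(page, size))`.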

ORA-01650: Unable to extend rollback segment RBS6

by %s in tablespace ROLLBACKSEGS

SELECT * FROM dba_rollback_segs;

SELECT * FROM dba_data_files;

ALTER DATABASE DATAFILE 'D:\ORACLE\ROLLBACKSEGS01.DBF'

RESIZE 1024m;

ALTER DATABASE DATAFILE 'C:\ORACLE\ROLLBACKSEGS01.DBF'

AUTOEXTEND ON NEXT 256m MAXSIZE 2048m;

<% response.setContentType("text/plain"); %>

:%s/^V^M//g

// Get the request-object.

HttpServletRequest request = (HttpServletRequest)

(FacesContext.getCurrentInstance().

getExternalContext().getRequest());

// Get the header attributes. Use them to retrieve the actual

// values.

request.getHeaderNames();

// Get the IP-address of the client.

request.getRemoteAddr();

// Get the hostname of the client.

request.getRemoteHost();

create sequence ATABLE_SEQ

minvalue 1

maxvalue 999999999999999999999999999

start with 1

increment by 1

cache 20;

create or replace trigger ATABLE_TRIG

before insert on ATABLE

for each row

begin

select atable_seq.nextval into :new.id from dual;

end;

Hashtable<String, String> env = new Hashtable<String, String>();

env.put(Context.INITIAL_CONTEXT_FACTORY,

"com.sun.jndi.ldap.LdapCtxFactory");

env.put(Context.SECURITY_AUTHENTICATION, "simple");

env.put(Context.SECURITY_PRINCIPAL, "<user>");

env.put(Context.SECURITY_CREDENTIALS, "<password>");

env.put(Context.PROVIDER_URL, "ldap://<host>:389");

env.put(Context.REFERRAL, "follow");

// We want to use a connection pool to

// improve connection reuse and performance.

env.put("com.sun.jndi.ldap.connect.pool", "true");

// The maximum time in milliseconds we are going

// to wait for a pooled connection.

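env.put("com.sun.jndi.ldap.connect.timeout", "300000");

// Create the context that is used for the search below.

LdapContext ctx = new InitialLdapContext(env, null);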

SearchControls searchCtls = new SearchControls();

// We start our search from the search base.

// Be careful! The string needs to be escaped!

String searchBase = "dc=company,dc=com";

// There are different search scopes:

// - OBJECT_SCOPE, to search the named object

// - ONELEVEL_SCOPE, to search in only one level of the tree

// - SUBTREE_SCOPE, to search the entire subtree

searchCtls.setSearchScope(SearchControls.SUBTREE_SCOPE);

// We only want groups, so we filter the search for

// objectClass=group. We use wildcards to find all groups with the

// string "<group>" in its name. If we want to find users, we can

// use: "(&(objectClass=person)(cn=*<username>*))".

String searchFilter = "(&(objectClass=group)(cn=*<group>*))";

// We want all results.

searchCtls.setCountLimit(0);

// We want to wait to get all results.

searchCtls.setTimeLimit(0);

// Active Directory limits our results, so we need multiple

// requests to retrieve all results. The cookie is used to

// save the current position.

byte[] cookie = null;

// We want 500 results per request.

ctx.setRequestControls(

new Control[] {

new PagedResultsControl(500, Control.CRITICAL)

});

// We only want to retrieve the "distinguishedName" attribute.

// You can specify other attributes/properties if you want here.

String returnedAtts[] = { "distinguishedName" };

searchCtls.setReturningAttributes(returnedAtts);

// The request loop starts here.

do {

// Start the search with our configuration.

NamingEnumeration<SearchResult> answer = ctx.search(

searchBase, searchFilter, searchCtls);

// Loop through the search results.

while (answer.hasMoreElements()) {

SearchResult sr = answer.next();

Attributes attr = sr.getAttributes();

Attribute a = attr.get("distinguishedName");

// Print our wanted attribute value.

System.out.println((String) a.get());

}

// Find the cookie in our response and save it.

Control[] controls = ctx.getResponseControls();

if (controls != null) {

for (int i = 0; i < controls.length; i++) {

if (controls[i] instanceof

PagedResultsResponseControl) {

PagedResultsResponseControl prrc =

(PagedResultsResponseControl) controls[i];

cookie = prrc.getCookie();

}

}

}

// Use the cookie to configure our new request

// to start from the saved position in the cookie.

ctx.setRequestControls(new Control[] {

new PagedResultsControl(500,

cookie,

Control.CRITICAL) });

} while (cookie != null);

// We are done, so close the Context object.

ctx.close();
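
The search filter above contains a placeholder for the group name. If that value comes from user input, the characters that have a special meaning in LDAP filters should be escaped before the filter is built: a backslash, `*`, `(`, `)` and the NUL character are each replaced by a backslash followed by their two-digit hex code. The sketch below shows one way to do this; the class name `LdapFilterEscaper` and its methods are invented for this example and are not part of JNDI.

```java
// Hypothetical helper, named for this example only.
final class LdapFilterEscaper {

    // Escapes a value so it can be placed inside an LDAP search filter.
    static String escape(String value) {
        StringBuilder sb = new StringBuilder(value.length() + 8);
        for (int i = 0; i < value.length(); i++) {
            char c = value.charAt(i);
            switch (c) {
                case '\\': sb.append("\\5c"); break;
                case '*':  sb.append("\\2a"); break;
                case '(':  sb.append("\\28"); break;
                case ')':  sb.append("\\29"); break;
                case '\0': sb.append("\\00"); break;
                default:   sb.append(c);
            }
        }
        return sb.toString();
    }

    // Builds the group filter from the search example; the wildcards around
    // the name are added here, after escaping, so they keep their meaning.
    static String groupFilter(String namePart) {
        return "(&(objectClass=group)(cn=*" + escape(namePart) + "*))";
    }

    public static void main(String[] args) {
        System.out.println(groupFilter("dev"));
        System.out.println(groupFilter("a*b(c)"));
    }
}
```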

String dN = "CN=JohnDoe,OU=Users,DC=company,DC=com";

Attributes answer = ctx.getAttributes(dN);

for (NamingEnumeration<?> ae = answer.getAll(); ae.hasMore();) {

Attribute attr = (Attribute) ae.next();

String attributeName = attr.getID();

for (NamingEnumeration<?> e = attr.getAll(); e.hasMore();) {

System.out.println(attributeName + "=" + e.next());

}

}

cd project

git init

git add .

git commit

cd <path_to_central_directory>

mkdir project

cd project

git init --bare

git remote add origin <path_to_central_directory>/project

git push origin master

git clone <path_to_central_directory>/project

git add file

git commit

git push [origin master]

git fetch

git merge origin/master

git pull origin master

git branch new_version

git branch

git checkout new_version

git merge new_version